The `block_size` defines the size of the block, which is chosen for 60 business days. The `result` argument is the result of bootstrap sampling, which has the dimension of. It is the `ref` argument in the kernel function, which has the dimension of. It serves as a reference matrix where we sample the random blocks. Since stock prices are non-stationary, the stock price time series data is first converted to log returns. Result[sample_id, asset_id, position_id * block_size + If (position_id * block_size + loc + 1 < length): Position_id = % num_positionsįor k in range(i, block_size*assets, ): boot_strap(result, ref, block_size, num_positions, positions): Notice the decorator, which tells Numba to just-in-time compile the boot_strap kernel. Papenbrock and Schwendner (2015) found multi-asset correlation patterns to change at a typical frequency of a few months.įigure 2: Create synthetic market data by Boomstrap methodįollowing is the Numba kernel to sample blocks from the reference prices matrix. This block length is motivated by a typical monthly or quarterly rebalancing frequency of dynamic rule-based strategies and by the empirical market dynamics that happen on this time scale.

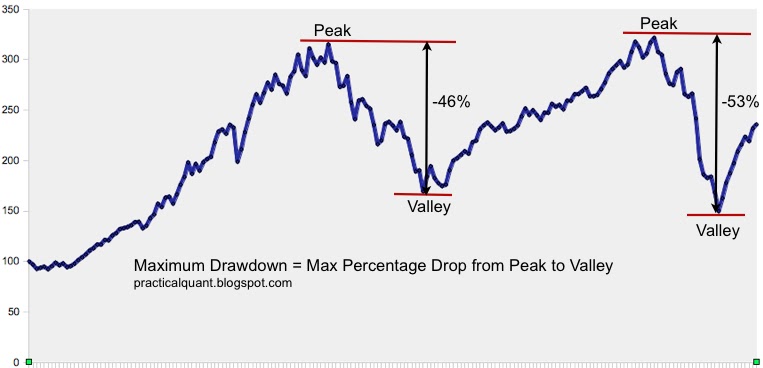

We choose to use a block of 60 business days. Each block has a fixed length, but a random starting point in time is defined from the futures return time-series. A new return time series is constructed by sampling the blocks with replacement to reconstruct a time series with the same length as the original time series. We will describe in detail how to perform block bootstrapping calculations in a Numba GPU kernel.īootstrapped datasets are used to account for the non-stationarity of time series’ future returns. The different scenarios are calculated using multiple GPU threads. Since the generated scenarios are independent from each other and large in number, a better method of parallelism should be at the scenario level. We might be able to vectorize a few steps of the HRP algorithm, however, the speed-up will be minimal because only a smaller number of parallel threads are used. Then, it distributes the allocation through recursive bisection based on the cluster covariance. It reorganizes the covariance matrix of the stock returns so similar investments are placed together. Some of the steps, like the HRP algorithm, are serial in nature. To accelerate them on the GPU, the most important thing that we need to identify is the granularity of the parallelism. There are quite a few steps involved in the preceding computation. 
Compute the Average annual Returns, STD Returns, Sharpe Ratios, Maximum Drawdown, and Calmar Ratio performance metrics for these two methods (HRP-NRP).įigure 1: Computation graph for the portfolio construction algorithm.At every rebalancing date, calculate the portfolio leverage to reach the volatility target.Compute the transaction cost based on weights adjustment on the rebalancing days.Compute the weights for the assets based on the Naïve Risk Parity (NRP) method.Compute assets distances to run hierarchical clustering and Hierarchical Risk Parity (HRP) weights for the assets.Compute the log returns for each scenario.Run block bootstrap to generate 100k different scenarios.As shown in the Figure 1, the use case in the previous blog includes following steps:Load csv data of asset daily prices It is one of the most important steps that a fund manager has to do to manage assets. The portfolio construction algorithm is used to calculate the optimal weights for constructing portfolios. It uses the same APIs and data structures so Python developers can pick it up easily. Dask integrates well with Python libraries like Numpy, pandas, cuDF, CuPy, etc. For larger problems that don’t fit in a single GPU, we use Dask for distributed computation in a cluster of GPUs. It makes writing CUDA more accessible to Python developers. The Python GPU kernels can be Just in Time (JIT) compiled to run on the GPU. Numba is a Python library that eases the implementation of GPU algorithms with Python. In this post, we will show how we used Numba and Dask to accelerate a portfolio construction algorithm by 800x as introduced in a previous blog. Developers must implement the algorithms from scratch to accelerate on the GPU. However, in certain domains like portfolio optimization, there are no Python libraries for easy acceleration of computational work.

For example, popular deep learning frameworks such as TensorFlow, and PyTorch help AI researchers to efficiently run experiments. Though Python is notoriously slow when the code is interpreted at runtime, many popular libraries make it run efficiently on GPUs for certain data science work. It ranks as the most popular computer language and is widely used for all kinds of tasks. Python is no stranger to data scientists.
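As a CPU-side reference for the block bootstrap described in this post, here is a minimal sketch in plain Python. It is not the post's GPU kernel. The function name `block_bootstrap`, the `seed` parameter, and the choice to draw one random start per block (shared by all assets) and to truncate the final block are assumptions made for illustration. The index arithmetic (`position_id * block_size + loc`) loosely mirrors the names used by the `boot_strap` kernel.

```python
import random


def block_bootstrap(ref, block_size, num_samples, seed=0):
    """Resample each asset's series in fixed-length blocks, with replacement.

    ref: list of per-asset series, all of the same length.
    Returns result[sample_id][asset_id][t], with the same length as the input.
    """
    num_assets = len(ref)
    length = len(ref[0])
    if block_size > length:
        raise ValueError("block_size must not exceed the series length")

    rng = random.Random(seed)
    # Number of blocks needed to cover the whole series (ceiling division).
    num_positions = -(-length // block_size)

    result = []
    for _ in range(num_samples):
        sample = [[0.0] * length for _ in range(num_assets)]
        for position_id in range(num_positions):
            # Assumption: one random start per block, shared by all assets,
            # so cross-asset co-movement inside a block is preserved.
            start = rng.randrange(length - block_size + 1)
            for loc in range(block_size):
                t = position_id * block_size + loc
                if t < length:  # the final block is truncated
                    for asset_id in range(num_assets):
                        sample[asset_id][t] = ref[asset_id][start + loc]
        result.append(sample)
    return result
```

On the GPU, the two outer loops (over samples and block positions) are the natural candidates to map onto thread blocks, since every scenario and every block position can be filled independently.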

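The performance metrics named in the last step of the pipeline can be sketched for a single scenario as follows. The definitions here are common conventions, not taken from the post: 252 business days per year, a zero risk-free rate for the Sharpe ratio, maximum drawdown measured as a fraction of the running peak in wealth, and the Calmar ratio as annualized return divided by maximum drawdown. The function name `performance_metrics` is hypothetical.

```python
import math
import statistics

TRADING_DAYS = 252  # assumed number of business days per year


def performance_metrics(log_returns):
    """Compute summary metrics from a list of daily portfolio log returns."""
    annual_return = statistics.fmean(log_returns) * TRADING_DAYS
    annual_std = statistics.stdev(log_returns) * math.sqrt(TRADING_DAYS)
    # Assumption: risk-free rate of zero.
    sharpe = annual_return / annual_std if annual_std > 0 else float("inf")

    # Track cumulative log return and its running peak; the drawdown is the
    # fractional loss in wealth relative to that peak.
    cumulative = 0.0
    peak = 0.0
    max_drawdown = 0.0
    for r in log_returns:
        cumulative += r
        peak = max(peak, cumulative)
        max_drawdown = max(max_drawdown, 1.0 - math.exp(cumulative - peak))

    calmar = annual_return / max_drawdown if max_drawdown > 0 else float("inf")
    return {
        "annual_return": annual_return,
        "annual_std": annual_std,
        "sharpe": sharpe,
        "max_drawdown": max_drawdown,
        "calmar": calmar,
    }
```

Because each scenario is evaluated independently, a function of this shape is a natural unit of work for scenario-level parallelism.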